I learnt recently from an old friend that there's a formula economists have been using since 1928 that quietly governs how every dollar of GDP gets split between the people who own things and the people who do things.

It's called the Cobb-Douglas production function. It lets us measure how, as a society, we're progressing economically. And recently, it's started to unravel.

What's Cobb-Douglas and why should you care?

To produce anything of value, you need 3 ingredients:

Technology (the knowledge and methods you're working with), Capital (machines, software, buildings, tools) and Labour (people doing the work).

Multiply them together in the right proportions and you get total economic output. That's the formula that underpins our GDP calculations, wage theory and tax policy. It's more or less the entire operating system of modern economies.
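For anyone who wants to see it written down, this is the standard textbook form. The symbols are the conventional ones rather than anything defined in this post: Y is total output, A is technology, K is capital, L is labour, and alpha (a number between 0 and 1) is the weight on capital.

```latex
Y = A \, K^{\alpha} L^{1-\alpha}, \qquad 0 < \alpha < 1
```

If you assume workers are paid the value of the extra output they add (a textbook assumption, not a law of nature), the wage and labour's slice of the pie fall out directly:

```latex
w = \frac{\partial Y}{\partial L} = (1-\alpha)\, A \, K^{\alpha} L^{-\alpha} = (1-\alpha)\,\frac{Y}{L},
\qquad
\frac{wL}{Y} = 1-\alpha
```

In other words, under those assumptions the formula predicts that labour's share of output stays fixed at 1 - alpha, no matter how much capital or technology you add.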

In our world, labour and capital are supposed to be complementary, i.e. you need both. A truck without a driver is... a parked truck. A spreadsheet without someone reading it is just a grid on a screen. You get the idea.

And it's this complementary relationship that's the reason wages exist. Capital gets deployed, labour utilises the machines, software, tools etc and economic output occurs. It's the engine behind the whole loop of work, earn, spend and grow that keeps everything turning.
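Here's a minimal sketch of that complementarity in code, using the textbook form Y = A * K^alpha * L^(1 - alpha). The value alpha = 0.3 and the input numbers are illustrative assumptions, not estimates, and the function names are my own.

```python
def cobb_douglas(technology: float, capital: float, labour: float,
                 alpha: float = 0.3) -> float:
    """Output Y = A * K**alpha * L**(1 - alpha). alpha=0.3 is an assumed value."""
    return technology * capital**alpha * labour ** (1 - alpha)


def marginal_product_of_labour(technology: float, capital: float, labour: float,
                               alpha: float = 0.3) -> float:
    """Extra output from one more unit of labour: (1 - alpha) * Y / L."""
    output = cobb_douglas(technology, capital, labour, alpha)
    return (1 - alpha) * output / labour


# The parked truck: capital with no labour produces nothing.
parked_truck = cobb_douglas(technology=1.0, capital=100.0, labour=0.0)

# Same workforce, double the capital: each worker's marginal product rises.
mpl_low_capital = marginal_product_of_labour(1.0, capital=100.0, labour=50.0)
mpl_high_capital = marginal_product_of_labour(1.0, capital=200.0, labour=50.0)

print(parked_truck)
print(mpl_high_capital > mpl_low_capital)
```

The second comparison is the "reason wages exist" point in miniature: in this model, adding capital makes each unit of labour worth more, not less.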

Quick caveat. The Austrian economists (Mises, Rothbard, Hayek) would say Cobb-Douglas was always a blunt instrument. It treats capital as one homogeneous lump when every piece of capital is actually specific, time-bound and can't be smoothly swapped for labour.

They're probably right about the limitations. But the trend lines it tracks (labour share declining, output decoupling from employment) are showing up in the real data regardless.

Having thought a lot recently about the collapse of knowledge work under the impact of AI, I think we're watching this function fall apart in real time.

AI now substitutes where technology previously amplified

For most of economic history, new technology made workers more productive. The Gutenberg press spread knowledge across the world by dramatically lowering the cost of producing books. Excel lets accountants handle 10x the clients by doing all the heavy lifting. Technology, generally speaking, has always amplified human labour. It makes the L in the equation more valuable. But based on what I'm now seeing, I've realised that AI does something vastly different. It's a labour substitute.

It substitutes human effort. Early on it substituted writing: ChatGPT would write the first draft, and while it wasn't perfect, it still saved a lot of time. Then its capability expanded into imagery and photography, again substituting for the human labour those traditionally required. And today, an AI agent can handle a customer support ticket from open to close without a human even touching it.

We now have AI workers that don't need a person and that, as of March 2026, can complete 14 hours of human work from one prompt.
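To make the amplify-versus-substitute distinction concrete, here's a rough sketch using a CES (constant elasticity of substitution) production function. To be clear, this is a swapped-in textbook generalisation of Cobb-Douglas, not something from the sources quoted in this post. The parameter sigma measures how easily capital can stand in for labour: sigma = 1 is the Cobb-Douglas case, and sigma above 1 is the "substitute" world. The alpha value and the input numbers are made up for illustration, and the sketch assumes workers are paid their marginal product.

```python
def ces_labour_share(capital: float, labour: float, sigma: float,
                     alpha: float = 0.3) -> float:
    """Labour's share of output under a CES production function.

    sigma is the elasticity of substitution between capital and labour.
    sigma == 1 is the Cobb-Douglas case, where the share is always 1 - alpha.
    alpha=0.3 is an assumed value, not an estimate.
    """
    if sigma == 1:
        return 1 - alpha
    rho = (sigma - 1) / sigma
    capital_term = alpha * capital**rho
    labour_term = (1 - alpha) * labour**rho
    return labour_term / (capital_term + labour_term)


# Hold labour fixed and let capital (think AI compute) grow tenfold.
for sigma in (0.5, 1.0, 2.0):
    before = ces_labour_share(capital=100.0, labour=100.0, sigma=sigma)
    after = ces_labour_share(capital=1000.0, labour=100.0, sigma=sigma)
    print(f"sigma={sigma}: labour share {before:.2f} -> {after:.2f}")
```

With these made-up inputs the share holds steady when sigma is 1, rises when capital and labour are strong complements (sigma below 1), and falls when capital substitutes easily for labour (sigma above 1). That last case is the scenario this section is worried about.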

I've been creating digital products for around 20 years and I'll be honest, the speed of this shift is catching me off guard. Things I assumed were a long way out are happening now.

The labour numbers are already shifting

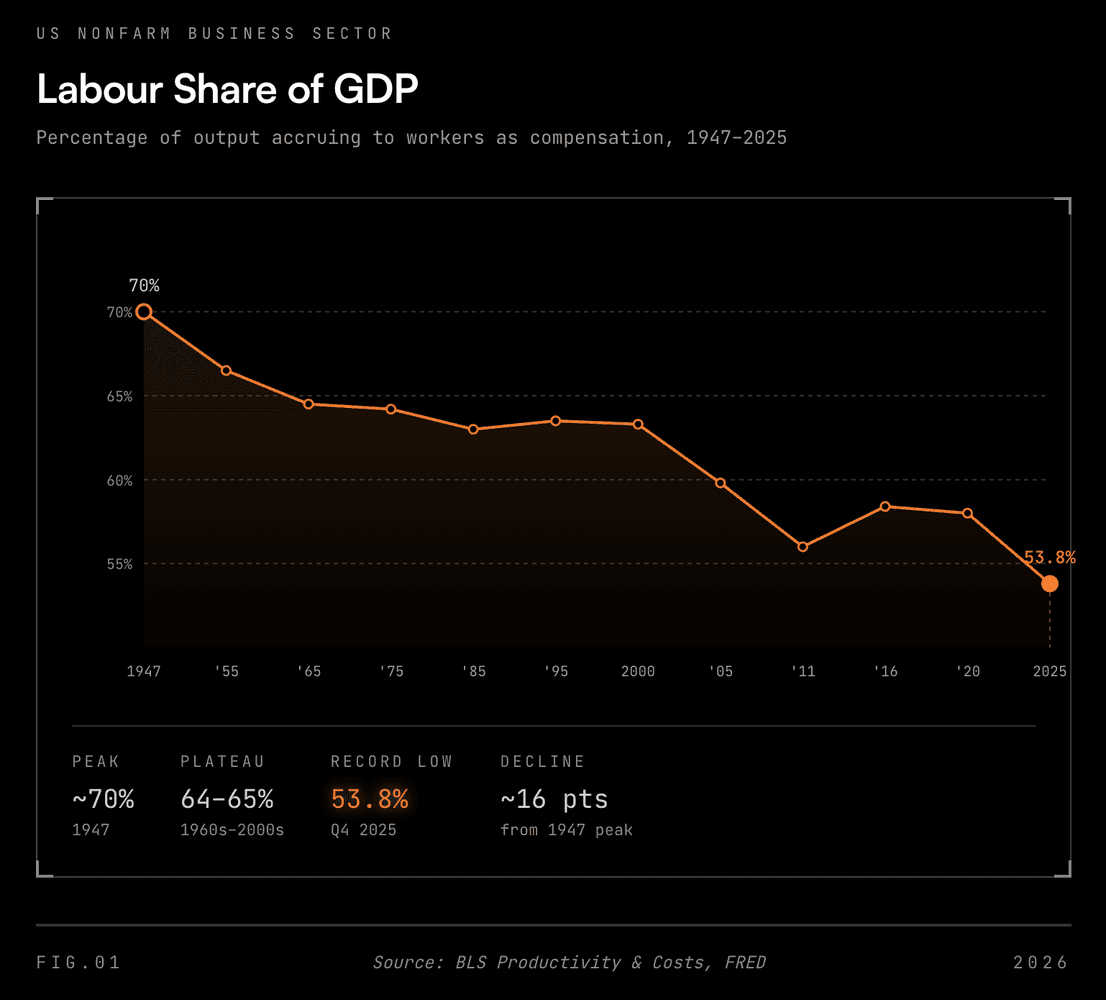

Labour's share of GDP in the US has been declining for decades. It started at around 70 percent in 1947 when the Bureau of Labor Statistics began tracking it, then settled into a range of 64 to 65 percent from the 1960s onward. Apologies for the US-centric data, but it was easier to find and the pattern is similar globally.

In Q4 2025, it hit 53.8 percent, the lowest level on record in data going back to 1947, according to the Bureau of Labor Statistics. From the post-1960s plateau, that's a drop of roughly 11 percentage points. From the 1947 starting point, it's closer to 16. Either way, trillions of dollars that used to flow to workers now flow to capital owners. More on the centralisation to capital owners soon…
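For anyone who wants to check the arithmetic, here's a quick sketch using the percentages quoted above. The dollar conversion is the hedged part: the $30 trillion figure for annual US output is a round number I've assumed purely for illustration, and the function name is my own.

```python
# Labour share figures quoted in this post (percent of output).
SHARE_1947 = 70.0
PLATEAU_LOW, PLATEAU_HIGH = 64.0, 65.0
SHARE_Q4_2025 = 53.8

plateau_mid = (PLATEAU_LOW + PLATEAU_HIGH) / 2
drop_from_plateau = plateau_mid - SHARE_Q4_2025  # percentage points
drop_from_1947 = SHARE_1947 - SHARE_Q4_2025      # percentage points


def annual_shift_in_dollars(drop_in_points: float, annual_output: float) -> float:
    """Dollars per year represented by a given drop in labour share."""
    return annual_output * drop_in_points / 100


# ASSUMPTION: a round $30 trillion of annual output, for illustration only.
ASSUMED_US_OUTPUT = 30e12

print(round(drop_from_plateau, 1), round(drop_from_1947, 1))
print(f"{annual_shift_in_dollars(drop_from_plateau, ASSUMED_US_OUTPUT) / 1e12:.1f} trillion per year")
```

Swap in whatever output figure you trust. Under any plausible value, a shift of around 11 points lands in the trillions of dollars per year.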

That trend was already happening before generative AI. Before ChatGPT. Before any of this.

But it would appear the explosion of agentic AI is pouring rocket fuel on it. Let's see what happens.

The jobless boom is already here

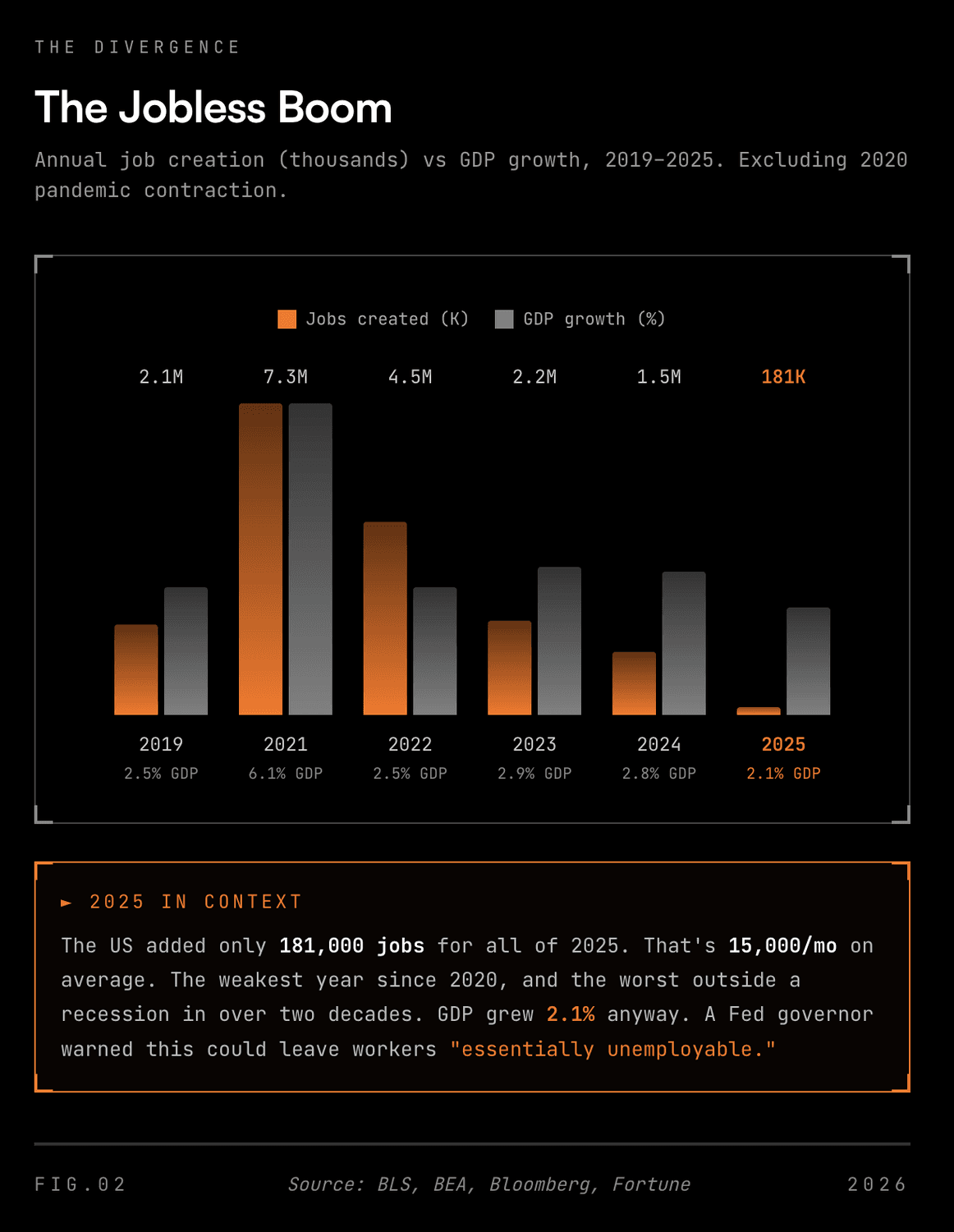

In February 2026, Bloomberg coined the term "jobless boom". It's the most important phrase in economics right now.

The US economy grew 2.1% in 2025 while only 181,000 jobs were created for the entire year. That's 15,000 per month. The weakest year for hiring since the pandemic year of 2020, and the worst outside a recession in over two decades.

The economy expanded and wealth was created. But jobs weren't.
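The 2025 numbers above are easy to sanity check. The "jobs per point of growth" line is a rough gauge I've made up for this sketch, not an official statistic.

```python
JOBS_ADDED_2025 = 181_000  # jobs created in 2025, as quoted above
GDP_GROWTH_2025 = 2.1      # percent growth in 2025, as quoted above

jobs_per_month = JOBS_ADDED_2025 / 12

# Hypothetical decoupling gauge: jobs added per percentage point of growth.
jobs_per_growth_point = JOBS_ADDED_2025 / GDP_GROWTH_2025

print(f"{jobs_per_month:,.0f} jobs per month")
print(f"{jobs_per_growth_point:,.0f} jobs per point of GDP growth")
```

Run the same two lines against earlier years and you can watch the second number move, which is all the "jobless boom" label really describes.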

This month, Fed Governor Michael Barr laid out 3 scenarios for how AI reshapes the labour market. In his "rapid growth" scenario, he said the following:

"Layoffs soar, leading to widespread unemployment in the short run and declines in labor force participation over time, as a large share of the population is essentially unemployable"

A sitting Fed governor put that on the record. In February 2026. Worth noting, he also said the current data aligns more closely with a "gradual adoption" scenario. But the fact he felt the need to articulate the extreme version publicly says something.

Oxford Economics expects job gains to average fewer than 40,000 per month through 2026 while GDP keeps growing. Newsweek called it "The Great Decoupling". NYU Stern economist Lawrence White said:

"We're building a system that runs faster than it employs. That's a long-term shift, not a one-year blip."

The Cobb-Douglas equation is breaking in front of us. Output is going up. But labour's contribution is going down. Capital (increasingly AI systems) is picking up the slack.

A counterargument. Software engineering roles are rising.

Citadel Securities published a rebuttal in February with actual data. Software engineering postings are up 11% year on year. If AI were already replacing knowledge workers at scale, you'd expect those to crater first.

The point they're making goes back to Keynes's 1930 prediction of a 15-hour workweek. Productivity grew exactly as he expected, but the shorter week never arrived, because people just invented new things to want.

Citadel argues AI will do the same. New industries, new demand and new jobs we can't imagine yet. I think they're probably right that the displacement won't be instant.

But, my gut read is that the 11% jump in software postings likely reflects the AI buildout itself (someone has to wire all of this together). It feels like a temporary demand spike dressed up as a trend.

The optimistic version is that as the cost of software comes down, demand explodes and engineers who adopt AI become more valuable. It happened with music. You used to need a recording studio and $500k. Then laptop production tools created an explosion of music and the bedroom producers won. I'd like to think the same pattern holds for software.

But the counterpoint to this is that the labour share decline is a long-term trend. There's the odd spike with hiring sprees, but overall the trend is down.

And previous technology waves were slowed by integration pain. You had to retrofit systems, retrain staff, rewire processes. This generation of AI tools skips that. They plug into existing systems, run workflows and operate across software without a human configuring every step.

Friction slows adoption. But the thing being adopted is specifically designed to remove friction.

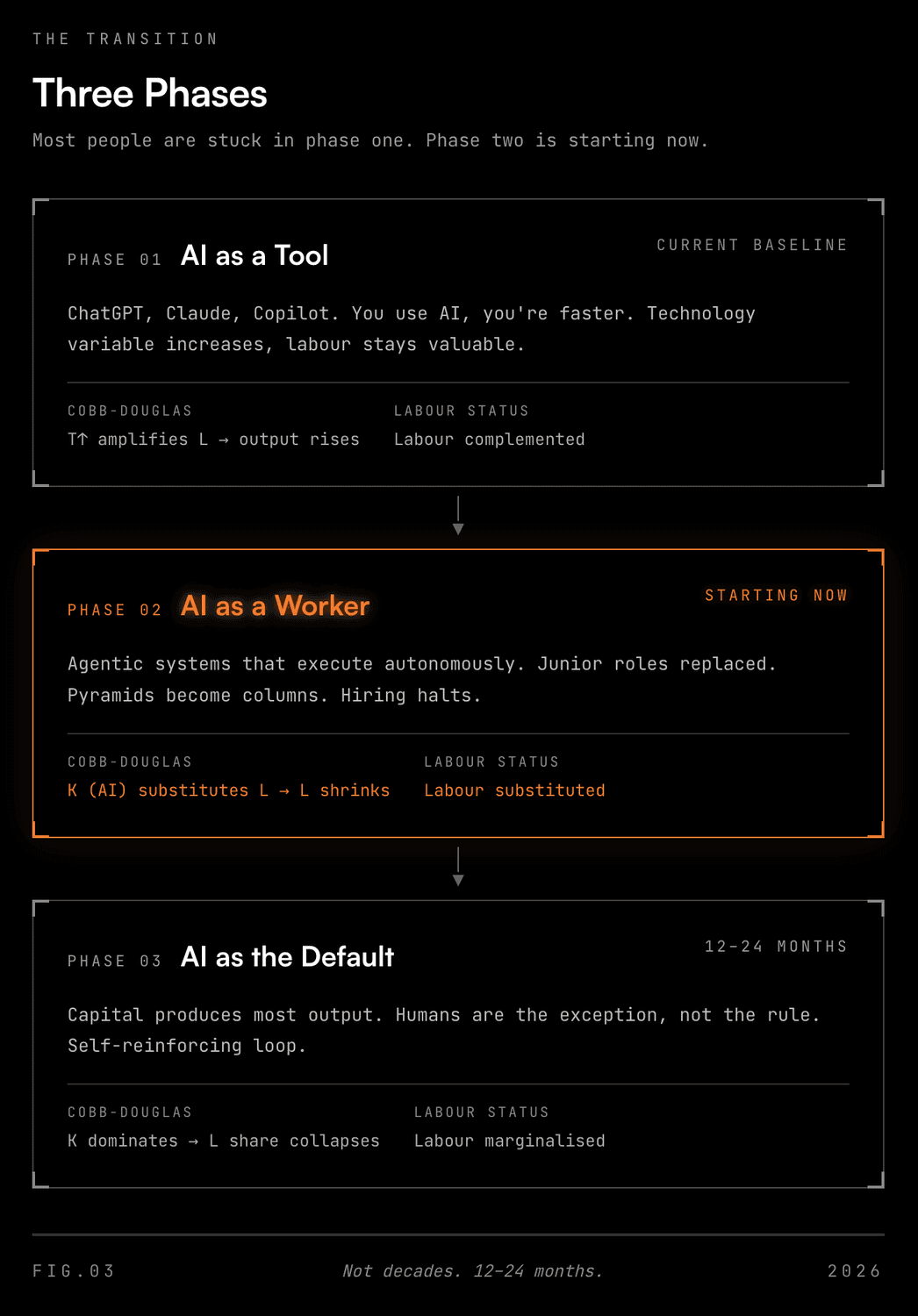

Three phases. Most people are stuck in phase one

Based on what I'm seeing I think this plays out over the next 12 to 24 months. Not decades.

Phase one is where most of my LinkedIn feed is at right now. AI as a tool. You use ChatGPT, you're faster. You use Claude Code, you ship more products.

Cobb-Douglas still basically works here because the AI boosts the technology variable, which makes your labour more valuable. This is the "learn AI or get left behind" crowd. Definitely valid, but already old news.

Phase two is starting now. AI as a worker. Agentic systems that execute autonomously.

When a finance company can deploy an AI agent that handles 80% of what a junior analyst does, the demand for junior analysts drops. The Fed's own Beige Book has noted firms replacing entry-level positions with AI or making existing workers productive enough to halt hiring. The labour variable in that equation starts shrinking.

Andrew Yang described it well recently: hiring used to look like pyramids (3 juniors for every senior) and now it's columns (1 senior, maybe 1 junior, done). His own company stopped hiring junior engineers because the CTO said the AI tools made the role unnecessary. And it's compounded by the fact that if you're young and looking for a career, why would you choose something that AI can do for dollars? Fewer people applying for certain roles, fewer roles being offered.

Phase three is the one nobody wants to believe is coming. AI as the default with humans as the exception.

Capital (which now includes AI infrastructure, compute and training data) produces most of the economic output. The labour share then drops further. It would appear to be a self-reinforcing loop, and not the good kind.

So if this plays out what breaks?

Well, it'll be everything downstream of the assumption that most adults trade their time for money.

Think tax systems, funded by income tax, which requires incomes. Healthcare tied to employment in the US. Retirement funds built on decades of wage contributions. Consumer spending (roughly 70% of GDP), powered by people earning and then spending.

If the L in Cobb-Douglas shrinks fast enough, we'll see the entire chain wobble. Who buys the products if fewer people are earning? Where does government revenue come from if wages are flat or falling? How do you fund a social safety net when the thing it's built on (widespread employment) is eroding?

Some believe that AI will aid the situation by dramatically reducing the cost of production. So we'll earn less but everything will cost less. A noble idea, but that won't happen overnight.

Past that I don't have an answer. I'm not sure anyone does yet. But there are ideas.

What happens next

UBI. Universal basic income. It's a popular idea… free money! It polls well in theory, but could be disastrous if not implemented correctly.

In practice, let's say we pay every Australian adult $26,000 a year (which is barely above the poverty line). This would cost over $520 billion, or roughly a fifth of our GDP. We're already running a $42 billion deficit in 2025-26 (since revised down to $36.8 billion at MYEFO) with Commonwealth net debt approaching $600 billion. UBI in this format is unsustainable. It'd be funded through more debt and would lead to economic collapse.
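Here's the back-of-envelope version of that cost, so you can plug in your own numbers. The adult population of 20 million is my assumption: it's the round figure implied by a $520 billion total at $26,000 each, so treat it as a floor rather than a census count.

```python
UBI_PER_ADULT = 26_000  # AUD per year, from the scenario above

# ASSUMPTION: about 20 million adults, the round figure implied by $520 billion.
ASSUMED_ADULTS = 20_000_000

DEFICIT_BUDGET = 42e9   # 2025-26 deficit quoted above
DEFICIT_MYEFO = 36.8e9  # revised MYEFO figure quoted above


def annual_ubi_cost(payment_per_adult: float, adults: int) -> float:
    """Gross yearly cost of paying every adult the same amount."""
    return payment_per_adult * adults


cost = annual_ubi_cost(UBI_PER_ADULT, ASSUMED_ADULTS)
print(f"Annual cost: ${cost / 1e9:.0f} billion")
print(f"Multiple of budget deficit: {cost / DEFICIT_BUDGET:.1f}x")
print(f"Multiple of MYEFO deficit: {cost / DEFICIT_MYEFO:.1f}x")
```

This is the gross cost only. It ignores any existing payments a UBI might replace and any tax clawed back, so it's a sketch of scale rather than a costing.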

We've already seen what unconditional cash does to an economy. The pandemic-era stimulus pumped spending without production behind it and we ended up with inflation.

UBI also breaks the feedback loop between work and income. If people get paid regardless, fewer go looking for work. The longer someone's out, the harder it is to get back in. Skills decay, confidence drops, and the gap on the resume grows.

So I think UBI in this form is bad for the individual, and it also hands enormous power to whoever controls the payments. We saw this with the Robodebt disaster. Canberra got too much power over who gets paid and who doesn't, and it went badly. UBI just scales that dynamic. The government deciding how much gets delivered to whom, when and on what terms shouldn't be our go-to-market strategy for this.

I think the more interesting models are the ones that redistribute ownership of GDP growth rather than just income.

Sam Altman proposed something called the American Equity Fund back in 2021. Companies above a certain valuation would be taxed 2.5% of their market value each year, paid in shares (not cash), and all privately held land would be taxed at 2.5% of its value, paid in dollars. Both flow into a national fund.

Every citizen over 18 gets an annual distribution in dollars and company shares. His estimate: roughly $13,500 per adult per year within a decade, rising as GDP grows. For the US, but the concept scales here too.
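The mechanics are simple enough to sketch. The 2.5% rate comes from the proposal as described above. Everything else here (the class and function names, the asset values, the adult count) is a round, made-up input for illustration, and the sketch ignores the valuation threshold and any effect the tax has on asset prices.

```python
from dataclasses import dataclass


@dataclass
class EquityFundInputs:
    company_market_value: float  # total value of companies above the threshold
    private_land_value: float    # total value of privately held land
    eligible_adults: int         # citizens over 18
    tax_rate: float = 0.025      # 2.5% a year on both, per the proposal above


def annual_distribution_per_adult(inputs: EquityFundInputs) -> dict:
    """Split one year's fund inflow evenly across eligible adults."""
    shares_inflow = inputs.company_market_value * inputs.tax_rate  # paid in shares
    cash_inflow = inputs.private_land_value * inputs.tax_rate      # paid in dollars
    return {
        "in_shares": shares_inflow / inputs.eligible_adults,
        "in_dollars": cash_inflow / inputs.eligible_adults,
        "total": (shares_inflow + cash_inflow) / inputs.eligible_adults,
    }


# ASSUMPTION: round, hypothetical inputs, not figures from this post.
example = EquityFundInputs(
    company_market_value=100e12,
    private_land_value=60e12,
    eligible_adults=250_000_000,
)
payout = annual_distribution_per_adult(example)
print({name: round(value) for name, value in payout.items()})
```

The output isn't meant to reproduce the $13,500 estimate, which rests on Altman's own assumptions. The point is the shape: the payout is a fixed slice of asset values, so it moves with them.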

The public receives equity, not allowance. Your payout scales with the economy's performance.

UBD. But I think there's a better version of this. Yanis Varoufakis takes it further with what he calls a Universal Basic Dividend. Public trusts hold shares in corporations benefiting from AI and automation. Dividends from those shares flow to the public.

It's distinctly different from UBI. It amounts to direct ownership in productive capital. Varoufakis's argument is that distributing ownership creates stakeholders. The incentive structure stays productive because the dividend only grows if the economy does.

There's also a third path emerging: direct philanthropy. Andrew Yang on a recent podcast discussed how Michael Dell committed $6.25 billion to children's savings accounts through the Invest America program, and suggests AI companies like Anthropic could similarly fund community initiatives and skip the government entirely.

Yang's argument is that billionaires won't trust the government to distribute it well, but they might fund it directly if they can see the impact. It bypasses the centralisation issue altogether.

The barrier for me on all of this is whether any of these options are politically possible. My view is that in the short term, no, it won't happen quickly enough.

But the difference between UBI and UBD matters to me. A GDP-linked dividend says "here's your share of our nation's growth. You own it." It ties each citizen to collective productivity, gives people skin in the game and incentivises economic growth over debt driven handouts. I know which model I'd rather build on.

Leaving the worst till last… the version we want to avoid is where wealth concentrates to capital owners. This would see the middle-class hollowed out and lead to political instability. History has some uncomfortable precedents here. We should aim to avoid this.

So what's it all mean then?

If you're in knowledge work, it means you're in the crosshairs of phase two whether you see it coming or not.

The people who navigate this well won't just be "good at using AI tools." That's the new baseline. They'll be the ones who understand that when AI handles the expertise, the execution and increasingly the judgment, all that's left is accountability. Or put more simply… owning the outcome. You'll need to stand behind the work when the machine gets it wrong. I've shared my thoughts on this previously.

On the previously mentioned podcast, Andrew Yang puts it bluntly: the only career path you can rely on is entrepreneurism and owning your own future. But then he admitted that probably 80% of people aren't cut out for that. So where does that leave everyone else? The surviving skill is ownership of outcomes, but the system that trained everyone to be employees didn't prepare them for it.

I've been writing about this for a while now. The sewing machine didn't kill seamstresses, but it did destroy the ones who couldn't adapt to what sewing became. The same thing is happening to knowledge work, right now, in real time.

The uncomfortable question is: can we manage this transition without things getting ugly? Do the people making decisions about it have any idea how fast the ground is shifting underneath them? I think the answer is increasingly becoming an obvious no, and that we're heading into the unknown.