I've been thinking about a prediction from Peter Diamandis and Emad Mostaque: by 2026, AI will handle 90% of economically valuable cognitive tasks. Not 2036... This year. They called it "the complete collapse of traditional knowledge work economics." Huge claim, I know. But if even half of it plays out, we need to pay attention to what it means for how we price our work.

Here's the bit that stuck with me: AI isn't coming for your judgment or your reputation. It's coming for your expertise… the knowledge you spent years accumulating. And if that becomes commoditised, what exactly are you selling?

Read on for why I think every professional services business needs to start thinking about insurance. Yes, I know, insurance.

The ‘Bundle’ Is Breaking

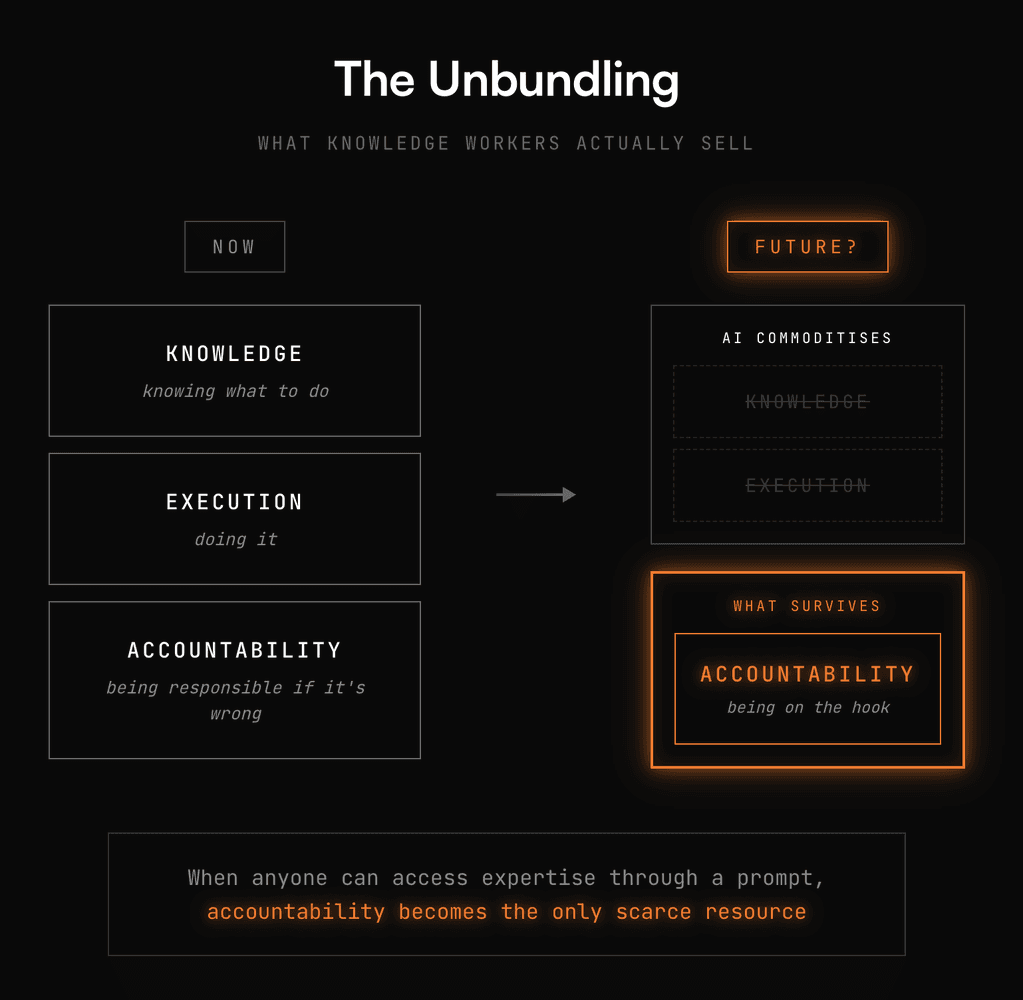

Every knowledge worker sells the same three things packaged together: knowledge (knowing what to do), execution (doing it), and accountability (being responsible if it's wrong). Lawyers sell this bundle. So do accountants, consultants, architects, developers, doctors. The whole knowledge economy runs on it.

AI is unbundling it. When anyone can access expertise through a prompt, knowledge stops being scarce. When AI can draft the contract, write the code, or generate the diagnosis, execution gets commoditised too. In that world one component survives: accountability. Someone still needs to stand behind the outcome. Someone still needs to be on the hook if it fails.

This Looks Familiar

I think people aren’t seeing this yet. But we already have an industry built entirely around accountability transfer. It's called insurance.

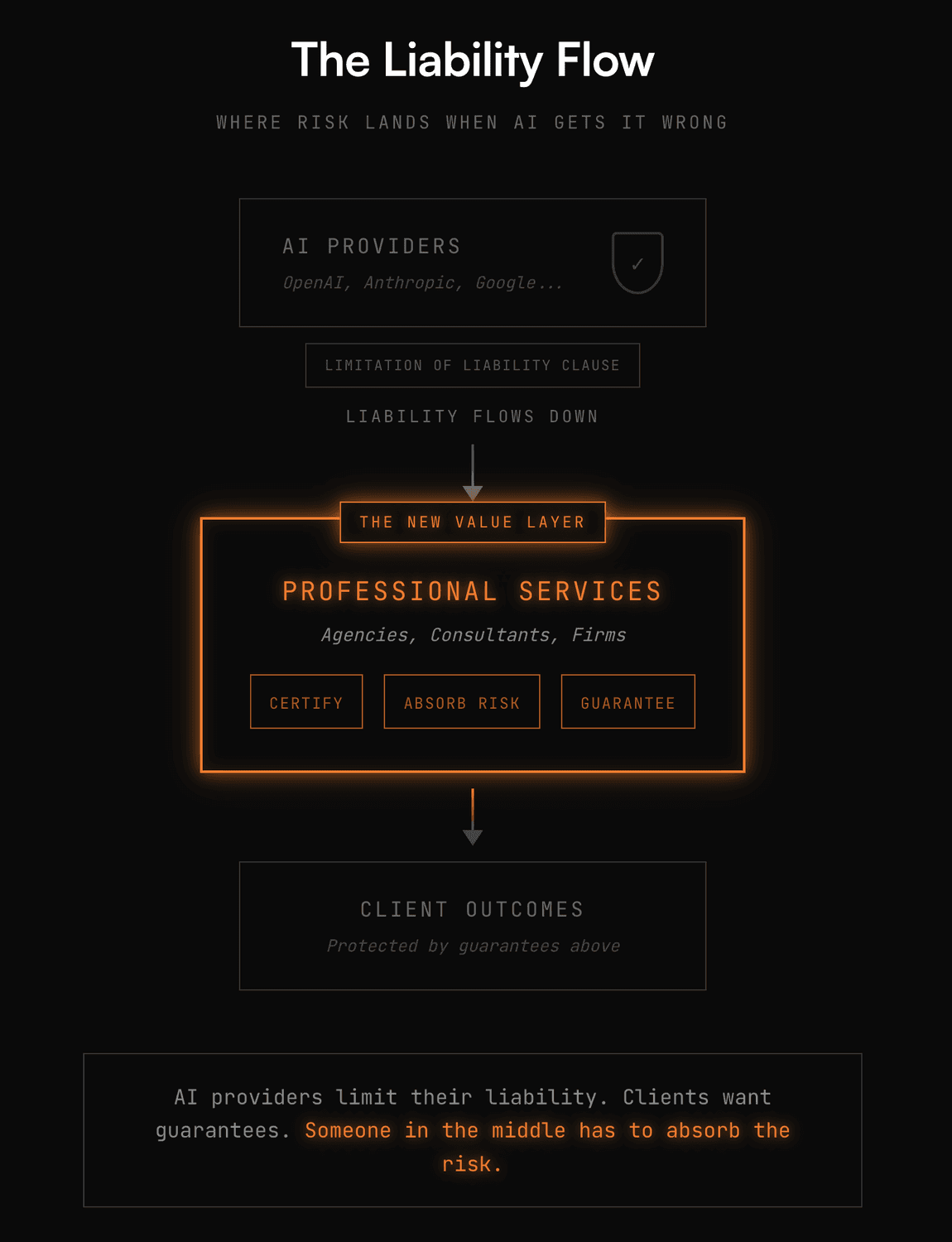

When you buy insurance, you're not paying for effort. You're paying for risk transfer: someone else agrees to absorb the consequences if things go wrong. That's increasingly what knowledge work looks like. Clients won't pay you to build things. They'll pay you to guarantee outcomes, to certify that the generated output meets specific standards, and to carry the liability when it doesn't.

Your value shifts from "I know how to do this" to "I'll stand behind this." The fee isn't for your time. It's a premium for absorbing their risk.

The Big Players Are Already Moving

Lloyd's of London (yes, the 338-year-old Lloyd's) launched coverage in 2025 specifically for AI failures. A startup called Armilla now offers policies covering "hallucinations, model drift, and algorithmic errors." Read that again... You can now insure against your AI making things up.

The policy covers legal costs and damages when AI systems "fail to perform as intended, generate critical errors, hallucinations or inaccuracies leading to damages." Deloitte estimates this market will hit $4.7 billion by 2032, growing at 80% annually. Insurers aren't waiting to see if AI disrupts knowledge work. They're already pricing the liability.

Check your own current indemnity policy. Does it explicitly cover AI-related errors? Insurers are starting to give this gap a name: "Silent AI", where nobody knows who's on the hook when the machine gets it wrong. What bothers me a little, though I understand it, is that all of the frontier AI labs' terms of use include a limitation of liability clause. If their AI gives bad advice and you relied on it without human oversight, that's your problem. So the liability flows downhill, and it lands on whoever certified the output.

The Pricing Problem Nobody Has Solved… Yet

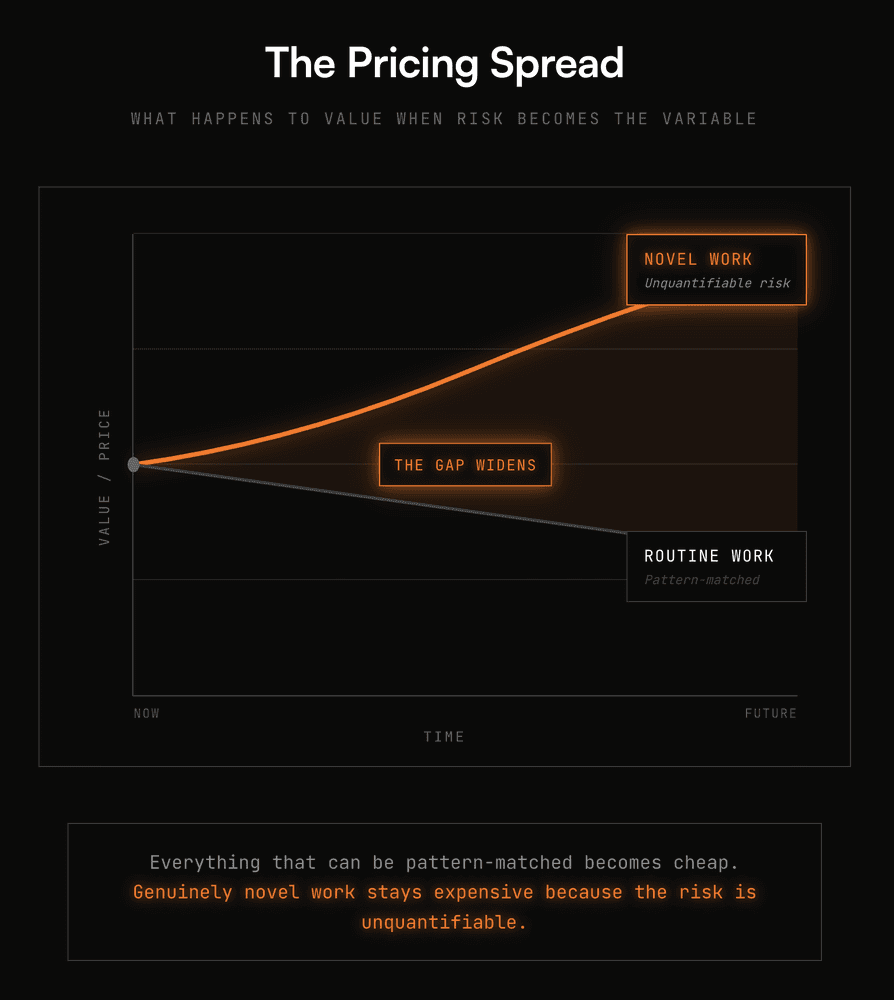

Here's where I think it gets uncomfortable. Insurance works because actuaries can model risk. They have decades of data on car accidents, house fires, medical claims. They know the probability distributions. Novel knowledge work has no historical data.

How do you price the risk of a new product failing in a new market? What's the actuarial table for an AI-generated legal strategy that's never been tested in court? You can't model it. The data doesn't exist.

I think this is the opportunity: genuinely novel work will stay expensive because the risk is difficult to quantify. No one can price it confidently, so the premium stays high. But everything that can be pattern-matched (routine contracts, standard code, templated designs) will become shockingly cheap, commoditised and insurable. The spread between novel and routine work is about to widen dramatically.
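To make that spread concrete, here is a rough sketch of why thin data pushes a premium up. It is a toy model of my own, not how any actuary or insurer actually prices: the premium is the average observed loss plus a loading proportional to how uncertain that average is. The function name, the `loading` factor and the loss figures are all made up for illustration.

```python
from statistics import mean, stdev


def indicative_premium(loss_history, loading=2.0):
    """Toy premium: average observed loss plus a loading on its uncertainty.

    The uncertainty is the standard error of the mean, which shrinks as
    the number of observations grows. `loading` is a hypothetical
    risk-aversion factor, not an industry figure.
    """
    n = len(loss_history)
    if n < 2:
        raise ValueError("need at least two observations to estimate uncertainty")
    expected_loss = mean(loss_history)
    standard_error = stdev(loss_history) / n ** 0.5
    return expected_loss + loading * standard_error


# Same underlying pattern (one 10,000 loss in every four engagements),
# but very different amounts of history. All figures are invented.
routine_losses = [0, 0, 0, 10_000] * 250  # 1,000 past engagements
novel_losses = [0, 0, 0, 10_000]          # only 4 past engagements

routine_premium = indicative_premium(routine_losses)
novel_premium = indicative_premium(novel_losses)

print(f"routine: {routine_premium:,.0f}")
print(f"novel:   {novel_premium:,.0f}")
```

Both histories have the same average loss of 2,500. The routine premium sits close to that average, while the novel one comes out at 7,500, purely because four data points tell you very little.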

I Think There Are Two Futures for Knowledge Workers

If accountability becomes the scarce resource, every knowledge worker ends up in one of two positions:

Risk Assessors: The people who evaluate AI output and decide whether to stamp it. They're the new experts. Not because they know more than the machine, but because they're willing to certify its work and carry the consequences. These are the people who have spent their entire careers building a deep specialisation and no longer need to execute.

Risk Pools: Think about why, for example, law firms exist at scale. It's not just brand. It's also the ability to absorb a bad outcome. If one engagement fails catastrophically, revenue from fifty other clients covers the loss. That's a risk pool. In a world where accountability is the product, I think size becomes a balance sheet question and not a headcount question.

So then individual practitioners without the capital to absorb liability become... what? Freelance reviewers working for the pools? Contractors who certify but don't carry risk? It feels like the economics of a small or solo practice are about to change.
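The risk pool idea can be sketched with some simple probability. This is a toy model under loud assumptions: every engagement fails independently with the same probability, every failure costs the same amount, and the fee, loss and failure rate are numbers I invented. `ruin_probability` is a hypothetical helper, not a standard actuarial formula.

```python
from math import comb


def ruin_probability(clients, fee, loss, p_fail, reserves=0.0):
    """Chance that one period's losses exceed fees collected plus reserves.

    Assumes independent engagements, each failing with probability
    `p_fail` and costing `loss` when it does (a strong simplification).
    """
    income = clients * fee + reserves
    probability = 0.0
    for failures in range(clients + 1):
        if failures * loss > income:
            # Binomial probability of exactly this many failures.
            probability += (
                comb(clients, failures)
                * p_fail ** failures
                * (1 - p_fail) ** (clients - failures)
            )
    return probability


# Invented figures: a 50,000 fee, a 500,000 loss when an engagement
# fails, and a 2% chance of failure per engagement.
solo = ruin_probability(clients=1, fee=50_000, loss=500_000, p_fail=0.02)
pool = ruin_probability(clients=51, fee=50_000, loss=500_000, p_fail=0.02)

print(f"solo practitioner: {solo:.2%}")
print(f"51-client pool:    {pool:.2%}")
```

With these made-up numbers, a single failure wipes out the solo practitioner, so their chance of ruin is the full 2%. The pool only goes under if six or more engagements fail in the same period, which is far less likely. Same work, same failure rate, very different ability to carry it.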

The Question I Can't Answer

I don't know how to price this yet. I've spent nearly 20 years designing and building digital products, billing for time and expertise. The model worked because execution was hard and knowledge was scarce. Both are becoming abundant.

If I'm honest, I'm not sure what replaces it. Maybe it's outcome-based pricing with downside exposure, like "we'll build it for $X, but if it doesn't hit Y metric, we refund or rebuild." This is already happening. Intercom does it with their AI agent Fin: they charge 99 cents per resolved ticket, and if Fin fails, no charge. Or maybe it's retainer-plus-liability models: ongoing relationships where we essentially underwrite product decisions. Maybe it's something that doesn't exist yet?
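Here is a back-of-the-envelope sketch of what downside exposure does to a price. It rests on assumptions of mine: you know your delivery cost, you can estimate the chance of missing the metric, and a miss triggers a refund of some share of the fee. The function names, the 20% miss rate and the 60% resolution rate are hypothetical. Only the 99 cent figure comes from the Fin example above.

```python
def expected_fee(price, p_miss, refund_share=1.0):
    """Average fee kept per engagement once refunds are accounted for."""
    return price * (1 - p_miss * refund_share)


def break_even_price(cost, p_miss, refund_share=1.0):
    """Lowest price at which the expected fee still covers delivery cost."""
    exposure = p_miss * refund_share
    if not 0 <= exposure < 1:
        raise ValueError("expected refund exposure must be in [0, 1)")
    return cost / (1 - exposure)


def per_resolution_revenue(tickets, resolution_rate, price_per_resolution=0.99):
    """Revenue when only successful outcomes are billed."""
    return tickets * resolution_rate * price_per_resolution


# Invented example: 80,000 to deliver, a 20% chance of missing the
# agreed metric, and a full refund if we miss.
price = break_even_price(cost=80_000, p_miss=0.2)
print(f"break-even price: {price:,.0f}")
print(f"expected fee:     {expected_fee(price, p_miss=0.2):,.0f}")

# Per-outcome billing with a hypothetical 60% resolution rate.
print(f"1,000 tickets:    {per_resolution_revenue(1_000, 0.6):,.2f}")
```

The gap between the 80,000 cost and the break-even price is the premium for carrying the risk. The hard part, as above, is that the miss probability for genuinely novel work is exactly the number nobody has.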

What I do know: the value is shifting from what we make to what we're willing to guarantee. I think the companies that figure out how to price accountability will own the next era. The ones still billing for hours will wonder where the margin went.

So What Are You Actually Selling?

This isn't a rhetorical question. I'm genuinely asking. If AI can do 80% of what you do, and clients can access that directly, what's left?

My bet: it's your willingness to be on the hook. Your reputation staked on outcomes. Your capital backing your confidence. The question is whether you're ready to price it that way.