AI writes the articles. AI generates the images. AI produces the ad copy, the product descriptions, the emails. And now with Sora, Veo, Seedance and Runway, AI makes the videos too. Suno is churning out 50,000 new music tracks a day. Text was first, then images exploded. Video and music are now right behind. In the middle of all of this, I found a conspiracy theory from 2021 that's starting to look eerily prescient.

In 2021, an anonymous user called "IlluminatiPirate" posted a rambling essay on an obscure retro-computing forum. They claimed that the internet had effectively died around 2016. The idea was that most of the content we see was already bots talking to bots and that humans were now the minority.

The Atlantic even covered it and said it was "wrong but feels true." Everyone nodded and moved on…

But then ChatGPT launched. Then Midjourney. Then Sora. And I've started wondering: in an online world increasingly dominated by generated content, does human authenticity actually cut through? Do the numbers show it converts better? Or does it just drown in the noise with everything else?

So I went looking for numbers. What I found was... complicated.

The numbers, medium by medium

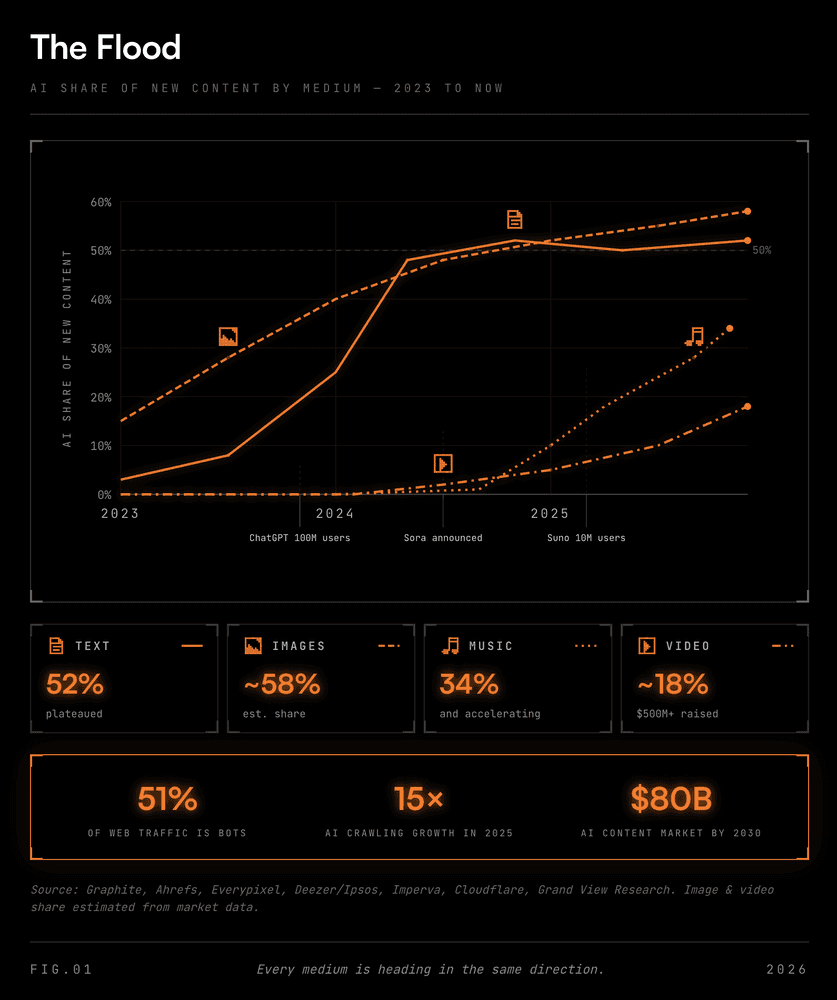

Text was first. In early 2025 Graphite tracked 65,000 English-language URLs from CommonCrawl and found more than half of new web articles crossed the AI-generated threshold in November 2024. That share has plateaued near 50% since May 2024, which surprised me. It's not accelerating any more, just stuck at half. In mid-2025 Ahrefs went broader with 900,000 pages. They found 74% had detectable AI content, though it's worth mentioning that only 2.5% was "pure AI." The rest was a human-AI mix, which includes anything touched by Grammarly.

Then images. In about eighteen months, more than 15 billion images were generated by AI. To put that into perspective, that's the same as every photograph taken in the first 150 years of photography. A century and a half of cameras, matched by a year and a half of prompts. And this was late 2023.

Video is where the money is going right now. AI video startups have collectively raised over $500 million since January 2025. And they're not just building generative video models, they're building content platforms. TikTok built Symphony, a full AI ad creation suite that turns a product URL into a finished video ad in minutes. There's no camera, talent or script. The ads are fully generated. There are also now at least eight AI UGC video generators designed specifically to produce fake "user-generated" content for TikTok ads. The broader generative AI content market was $14.8 billion in 2024. It's now projected to hit $80 billion by 2030.

Music is the one that scares me most. Deezer did a great survey with Ipsos, and it has been tracking the explosive growth in real time. In January 2025 on Deezer, 10,000 AI-generated tracks were uploaded daily. 10% of all uploads. By April it was 20,000 a day at 18%. By November, 50,000 a day at 34%. A 400% increase in ten months. And they even tested whether users could tell. 97% could not. 9,000 people. Eight countries. AI songs are now even charting on Billboard…
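If you want to sanity-check that 400% figure, here's a minimal sketch of the arithmetic using only the Deezer numbers quoted above. The function and variable names are my own, and the "implied total uploads" line is just a back-of-envelope derivation from the quoted shares, not a figure Deezer published.

```python
def percent_increase(start: float, end: float) -> float:
    """Relative growth from start to end, expressed as a percentage."""
    return (end - start) / start * 100


# Daily AI-generated uploads quoted above (January, April, November 2025).
daily_ai_uploads = {"Jan": 10_000, "Apr": 20_000, "Nov": 50_000}
# AI share of all daily uploads, in percent, as quoted above.
ai_share_pct = {"Jan": 10, "Apr": 18, "Nov": 34}

growth = percent_increase(daily_ai_uploads["Jan"], daily_ai_uploads["Nov"])

# Assumption: total daily uploads = AI uploads / AI share.
implied_total = {
    month: daily_ai_uploads[month] * 100 / ai_share_pct[month]
    for month in daily_ai_uploads
}

print(f"AI uploads grew {growth:.0f}% from January to November")
for month, total in implied_total.items():
    print(f"{month}: roughly {total:,.0f} total uploads per day implied")
```

Going from 10,000 to 50,000 is a fivefold jump, which is the same thing as a 400% increase. The implied totals suggest overall uploads grew too, but far more slowly than the AI share.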

And advertising? American Eagle saw 60% higher ROAS with AI-generated ads. Halara hit 70% lower cost-per-acquisition. But the key thing here is that it wasn't because the AI creative was better. The bottleneck in TikTok advertising was never distribution. At $3-5 CPM, reaching 100,000 people costs a few hundred dollars. The bottleneck was producing the 20-30 video variations you need to find winners. What I am seeing with generative advertising is that AI doesn't make better ads. It just makes more of them, faster.
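The distribution maths is simple enough to sketch. CPM is the cost per thousand impressions, so the calculation below treats "reaching 100,000 people" as 100,000 impressions, which is a simplification. The test-budget figures at the end (25 variations, 10,000 impressions each, $4 CPM) are hypothetical numbers I picked to illustrate the point, not data from any campaign.

```python
def reach_cost(impressions: int, cpm_usd: float) -> float:
    """Media cost in USD for a number of impressions at a given CPM."""
    return impressions / 1000 * cpm_usd


# Range quoted above: $3-5 CPM for 100,000 impressions.
low = reach_cost(100_000, 3.0)
high = reach_cost(100_000, 5.0)
print(f"100,000 impressions: ${low:,.0f} to ${high:,.0f}")

# Hypothetical test plan: 25 variations, 10,000 impressions each, $4 CPM.
variations = 25
impressions_per_variation = 10_000
test_budget = reach_cost(variations * impressions_per_variation, 4.0)
print(f"Testing {variations} variations: ${test_budget:,.0f} in media spend")
```

Under those assumptions the media spend to test every variation is about a thousand dollars. The expensive part was always making the 25 videos, not showing them.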

And underneath all of the generated content, bot traffic overtook human traffic in 2024. 51% automated. AI crawling grew 15x through 2025.

So right now text is past 50%, music uploads at 34%, 15 billion AI images in 18 months and video tools just raised half a billion. Every format is heading in the same direction.

How did this happen so fast?

I suppose the same reason it's happened every time in history when a new technology works. Costs collapsed.

Some numbers. A 1,000-word article from GPT-4o-mini costs about $0.001. A tenth of a cent. A full song on Suno costs about $0.05 on their paid plan. A TikTok ad video from Symphony? Free. An AI-generated "UGC" product review video costs a few cents! Compare any of those to hiring a writer ($100-500), a musician ($500-2,000 for custom composition), a video production team ($5,000-50,000), or a UGC creator ($200-500 per video). Unfortunately the economics aren't even close, and it's obvious which way this goes.
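To put a number on "aren't even close", here's a rough sketch that divides the human price ranges above by the AI cost per item. It only covers the two formats where I quoted a specific AI price (articles and songs). The dictionary layout and names are mine, and the ratios ignore quality, editing time and everything else that isn't the sticker price.

```python
# Per-item costs in USD, taken from the figures quoted above.
ai_cost = {"article": 0.001, "song": 0.05}
human_cost_range = {"article": (100, 500), "song": (500, 2_000)}


def cost_multiple(human_usd: float, ai_usd: float) -> int:
    """How many times more the human option costs, rounded to a whole number."""
    return round(human_usd / ai_usd)


multiples = {
    item: (
        cost_multiple(human_cost_range[item][0], ai_cost[item]),
        cost_multiple(human_cost_range[item][1], ai_cost[item]),
    )
    for item in ai_cost
}

for item, (low, high) in multiples.items():
    print(f"{item}: human-made costs {low:,}x to {high:,}x the AI price")
```

On those numbers a human-written article costs somewhere between 100,000 and 500,000 times the AI price, and a commissioned song between 10,000 and 40,000 times.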

ChatGPT has over 800 million weekly active users now, pushing toward 900 million by the end of 2025. Suno hit 10 million users in under two years. The barrier to mass content production across every format is basically gone.

But we have detection tools, right? As it turns out, they're flaky and never really worked. University of Maryland researchers found that telling AI text apart from human text becomes "increasingly difficult, if not impossible" as the models improve. A Brock University study put human detection of AI text at a 24% true positive rate. I mean that's worse than guessing... For music, 97% of listeners couldn't tell the difference. For video, we're not even bothering to measure yet because the tools are improving too fast.

You can't filter what you can't spot. And right now, it seems we can't spot much.

The systems meant to manage this aren't keeping up

It would seem the search engines are losing. Researchers from Leipzig, Weimar, and ScaDS.AI spent a year watching Google, Bing, and DuckDuckGo fight AI spam. Their conclusion was pretty clear:

Search engines "seem to lose the cat-and-mouse game that is SEO spam."

Google's March 2024 update knocked AI content in results from 8.48% down to 7.43%. But by July 2025 it had climbed to 19.56%. An all-time high. The filters aren't working.

And here's an alarming thought if you're a heavy user of AI. A Nature paper from July 2024 showed that when AI trains on AI-generated data, outputs get progressively worse. It's a downward slope towards homogenisation. Minority viewpoints disappear first. Then variety. Then coherence. The researchers called it "model collapse." Others have nicknamed it "Habsburg AI." Every training cycle compounds the errors. Which means the flood isn't just big. It's getting worse over time. Weirdly though, that might actually help human creators. If the machine output keeps degrading, the gap between generated and human-made just gets wider.

Sam Altman posted on X in September 2025:

"i never took the dead internet theory that seriously but it seems like there are really a lot of LLM-run twitter accounts now."

The guy who runs the company most responsible for this, saying it out loud. The replies were all ChatGPT-style parodies. Of course they were.

Ok, down to brass tacks. Does authenticity actually cut through?

This is the question I started with. If everything is AI, does being real even help? Or does it just drown in the noise with everything else?

The short answer is yes. Emphatically.

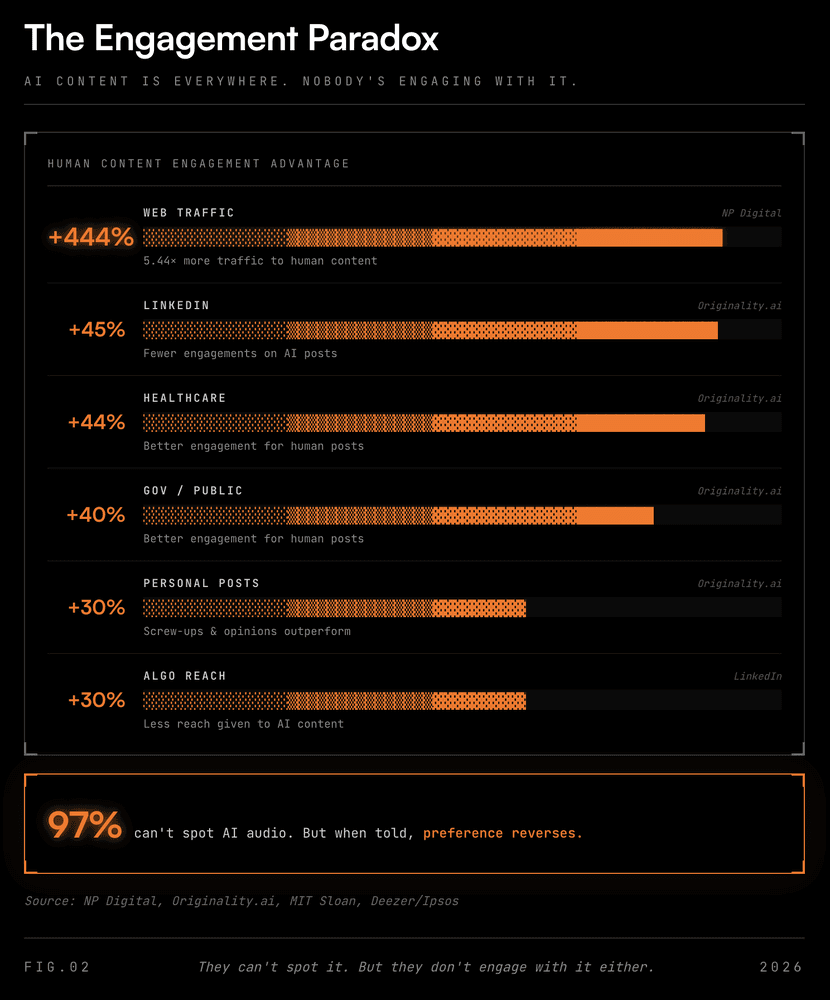

People can smell AI content. Maybe not consciously. But the engagement numbers don't lie.

AI-generated LinkedIn posts get 45% fewer engagements than human-written ones. In healthcare? Human posts see 44% better engagement. Government and public affairs? 40% better. LinkedIn's algorithm has also started penalising obvious AI content with 30% less reach. The platform itself is pushing back despite adding AI post improvement features.

NP Digital found human content earns 5.44x more traffic than AI material. Five times! An MIT Sloan study ran an experiment in which people actually preferred AI content in blind tests. But when they found out an AI wrote it, that preference dropped off significantly. The researchers called it "human favoritism." We want to know that a person made it. Good content alone apparently isn't enough.

When AI makes content free, supply of generic stuff approaches infinity. Value goes to zero. Basic economics. What doesn't go to zero? Knowledge earned through failure. The willingness to be wrong in public. Posts about personal screw-ups and unpopular opinions get up to 30% more engagement on LinkedIn. Because a model can't generate that. Proof you were actually there.

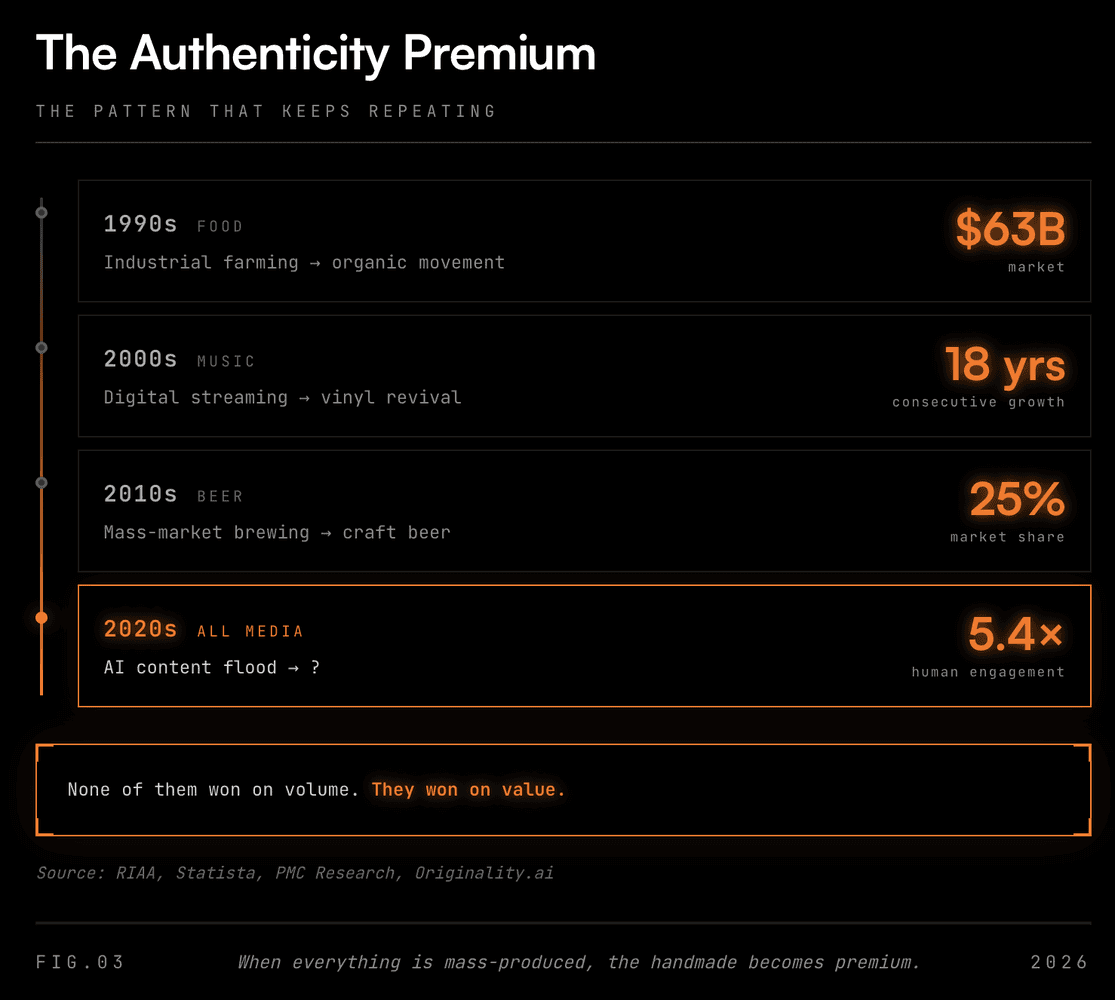

And this keeps happening. Every time production gets cheaper and more uniform, a counter-movement shows up.

Vinyl records. 18 consecutive years of sales growth. Under a million units to 43.6 million. $1.9 billion globally. And the interesting part for me is that Gen Z is driving it. Digital natives buying physical records. Vinyl outsold CDs by revenue for the first time since the 1980s. Nobody needs vinyl, and that's the whole point. It's preferable because it's human and tangible.

Craft beer. Researchers found people rated craft beer higher than mass-produced, and the authenticity signal influenced perceived quality beyond what blind taste tests showed. People literally thought it tasted better because they knew a smaller operation made it.

Organic food. Same pattern. Industrial production, consumer backlash, certification standards, mainstream adoption at a premium price.

Vinyl still outsells CDs by revenue but it's a fraction of streaming. Organic food is mainstream but most food is still industrial. Craft beer has 25% market share but Budweiser is still Budweiser. These movements never replaced the dominant thing. They just carved out a premium lane where people pay more because they trust where it came from.

What's being built to fix this

If the internet is being flooded by generated content, what's being done to verify authenticity? Currently I think C2PA is the most interesting attempt. The Coalition for Content Provenance and Authenticity embeds cryptographically signed "Content Credentials" into media files. Kind of like a nutrition label for digital content. Adobe, BBC, Microsoft, and Truepic started it. Google, OpenAI, Meta, and Amazon have since joined. It's on track to become an ISO standard.
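To make the "nutrition label" idea concrete, here's a toy sketch of a tamper-evident credential. To be clear, this is not the C2PA format or its API. Real Content Credentials use certificate-based public-key signatures and a structured manifest embedded in the file. I'm using a shared-secret HMAC from Python's standard library as a stand-in, and the key, field names and function names are all made up for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real system would use a private signing key
# tied to a certificate, not a shared secret.
SECRET_KEY = b"demo-key-not-for-real-use"


def _sign(manifest: dict) -> str:
    """HMAC-SHA256 tag over a canonical JSON encoding of the manifest."""
    payload = json.dumps(manifest, sort_keys=True).encode()
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def make_credential(content: bytes, author: str) -> dict:
    """Bind an author claim to the exact bytes of the content."""
    manifest = {"author": author, "sha256": hashlib.sha256(content).hexdigest()}
    return {"manifest": manifest, "signature": _sign(manifest)}


def verify_credential(content: bytes, credential: dict) -> bool:
    """True only if the manifest is untampered and matches the content."""
    manifest = credential["manifest"]
    signature_ok = hmac.compare_digest(_sign(manifest), credential["signature"])
    content_ok = manifest["sha256"] == hashlib.sha256(content).hexdigest()
    return signature_ok and content_ok


credential = make_credential(b"original photo bytes", "Jane Doe")
print(verify_credential(b"original photo bytes", credential))
print(verify_credential(b"edited photo bytes", credential))
```

The first check passes and the second fails, because changing a single byte of the content changes its hash. The same logic is why a credential can show where something came from but can't force anyone to attach one in the first place.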

Governments are moving too. The EU AI Act mandates machine-readable labels on AI content by August 2026, with penalties up to 3% of global turnover. In the US, 47 states now have deepfake legislation. China has mandatory AI labels. And Meta tagged 360 million AI-generated posts in a single month.

But I'm skeptical any of this solves it. Google's SynthID has marked 10 billion pieces of content, which sounds impressive. But as the EFF has argued, AI watermarking schemes are fundamentally fragile. Most have proven easy to remove, and a state-sponsored disinformation campaign isn't going to politely watermark its output. Regulation will help around the edges but it's certainly not going to fix the core problem.

So does anyone actually care?

The Dead Internet Theory got the diagnosis right. More than half of new articles are machine-generated. A third of new music is synthetic. 15 billion AI images in 18 months. But the theory missed what happens next. When the default becomes artificial, being real becomes the scarce thing. Everyone's zigging toward automation. The value is in the zag.

But here's what bothers me. The engagement data is clear right now. Human content wins. But 97% of listeners already can't spot AI music. Video is months away from the same threshold. Text is probably already there for most readers. If you assume any rate of improvement, and we know the rate is steep, how long before the gut feeling stops working?

Maybe that's the wrong thing to rely on anyway. There's another version of authenticity that doesn't depend on gut feeling or detection tools. It's structural. Someone willing to put their name on the work. Someone carrying the liability if it's wrong. I recently wrote about how AI is unbundling all knowledge work, and the piece that survives is accountability. The willingness to be on the hook.

When everything in the feed could be generated, people stop asking "did a human make this?" They start asking "will someone stand behind it?" And that question works regardless of whether anyone can spot the difference. It just needs someone with skin in the game.

So what I learnt was that the authenticity premium is real today. Whether it lasts is a genuinely open question. But the accountability premium? I think that one sticks.